Agents

When AI goes from words to action

Lately it’s impossible to open LinkedIn, Twitter (sorry, Elon, I’ll never call it X), or our favorite social media without running into a post about AI agents.

You’re probably thinking that Claude or ChatGPT are agents, and that their marketing teams are just rebranding them from “assistants” to “agents” to make them sound cooler. Well, no. This time it’s not just hype; it’s an actual shift in the technology.

And it’s not just any shift. We’re talking about an AI that’s starting to have goals and act on its own to achieve them. (Wasn’t that the start of every apocalyptic tech movie?)

Today I want to tell you what an agent is and how it’s different from a simple assistant like ChatGPT. The idea is that you can separate the hype from reality and, as a bonus, impress everyone at your next family dinner.

Let’s begin.

What is an AI agent?

When I think of an agent, the first thing that comes to mind is Agent Smith, from The Matrix. If you haven’t seen The Matrix... well, shame on you!

Please stop reading this immediately and I’ll see you in 2 hours and 16 minutes, with your brand new perspective on the world.

OK, now we can continue.

Agent Smith had one single mission: maintain order inside the simulation and make sure the connected humans never questioned reality or discovered the truth about the Matrix. To accomplish that goal, Smith spent four movies chasing Neo.

In the real world, where agents don’t wear sunglasses, we define them as:

An entity that has a goal and acts autonomously to achieve it.

OK, so that’s what an agent is. An AI that has a goal and works on its own, making decisions step by step until it gets the job done. The good news is that its goal (for now) isn’t to chase us down... has anyone here seen Terminator?

There’s a big difference between an assistant, like ChatGPT, and an agent. While you have to guide your assistant step by step and tell it exactly what to do, an agent figures out on its own how to reach its goal.

Agents don’t wait for step-by-step instructions. You just tell them what the goal is, and they make decisions and move forward on their own until the mission is complete.

If we want to get technical for a second, we could say that an agent is a system that perceives its environment, acts autonomously, uses tools, and pursues goals.

You might be thinking something like: “But Germán, my assistant can already search the Internet, use tools, and even write code... so isn’t it an agent?”

Great question! No. 😅

These capabilities are part of what makes an agent, but assistants still don’t have the autonomy to plan and execute without human intervention until they reach their goal. Assistants still need you to guide them step by step.

An agent has four key characteristics (besides the sunglasses). Let me walk you through them.

The 4 things that make an agent

We already know that the key word here is autonomy. Now let’s look at what one of these agents needs to achieve it.

There are four things that make an agent truly an agent (and not just someone hyping up their AI). Let’s go through them one by one.

1. It has a goal and doesn’t stop until it’s done

This is the main characteristic of an agent: it has a specific mission and will work until it completes it.

Sound familiar? That’s basically the plot of every apocalyptic sci-fi movie you’ve ever seen!

While your assistant answers random questions or carries on a conversation with you, an agent receives a mission and understands it has a goal to achieve. It’s not just trying to give you an answer. An agent will do whatever it takes to complete its mission.

When you give an agent a goal, you don’t need to tell it what steps to take. It figures out on its own what needs to be done, the sub-goals it has to accomplish, and how each step brings it closer to its target.

Of course, the agent needs to know when it has completed the goal you set for it. So it keeps evaluating its progress toward the target until it recognizes it has reached judgment day... sorry, its “final state.”

2. Observe, think, act... repeat

OK, an agent doesn’t follow a fixed plan. The real world is a complex scenario and you need to be able to adapt and respond.

As every human knows, having a fixed plan and not being able to adjust along the way is a recipe for disaster. We are flexible and machines are not, so we need to find a way to give them that flexibility.

That’s why agents work with an action cycle:

Observe: assess the current situation.

Think: based on what it observed, decide what the next action should be.

Act: execute that action.

And then back to step 1. This repeats over and over until the goal is met. Notice how this cycle allows our agent to adapt to the situation at every step.
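If you like seeing things in code, here’s a toy sketch of that cycle in Python. Everything in it is made up for illustration: the “environment” is just a counter, the goal is a number, and the `think` function is a plain rule. In a real agent, that thinking step would typically be handled by an AI model.

```python
def observe(state):
    """Observe: read the current situation (here, just a counter)."""
    return state["counter"]


def think(observation, goal):
    """Think: decide the next action based on what was observed."""
    if observation < goal:
        return "increment"
    if observation > goal:
        return "decrement"
    return "stop"  # goal reached, nothing left to do


def act(state, action):
    """Act: execute the chosen action on the environment."""
    if action == "increment":
        state["counter"] += 1
    elif action == "decrement":
        state["counter"] -= 1


def run_agent(state, goal, max_steps=100):
    """Repeat observe -> think -> act until the goal is met (or we give up)."""
    steps = 0
    while steps < max_steps:
        observation = observe(state)
        action = think(observation, goal)
        if action == "stop":
            break
        act(state, action)
        steps += 1
    return steps


state = {"counter": 3}
steps = run_agent(state, goal=7)
print(state["counter"], steps)  # prints: 7 4
```

Notice the `max_steps` limit. It’s my own addition, so the loop can’t run forever if the goal turns out to be unreachable.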

Just to be clear, this is not the same as an automation. In an automation you program the steps to follow, so if something changes, the automation will probably fail. Agents can evaluate the situation and adapt to it.

This doesn’t mean that automations are bad, not at all. Actually, I think they’re an essential tool. I just wanted you to know that an automation is not the same as an agent.

automation ≠ agent.

In short, an agent has the ability to iterate over and over, adapt, and adjust its plan on the fly.

3. It remembers

None of what I’ve told you so far works if an agent doesn’t have memory. Agents need to remember what they’ve been doing. They need to know what actions they’ve already taken, which ones worked (and which didn’t), and how they’re progressing toward their goal.

Can you imagine Terminator forgetting about finding Sarah Connor halfway through the movie? Or Agent Smith not being able to remember that Neo’s name in the Matrix is Mr. Anderson?

Without memory, every “Observe-Think-Act” cycle would start from scratch. Our agent would have no idea that what it’s trying has already been attempted three times before and never worked. The fact that it can remember is what makes this truly a cycle.

This memory is not the same as the one your ChatGPT has, for example. An agent’s memory is much more structured and keeps track of its progress toward its goal. If we want to get technical, this is called maintaining “state.”
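To make “state” a bit more concrete, here’s a minimal sketch in Python. The names (`AgentState`, `record`, `already_failed`, `choose_action`) are hypothetical, invented just for this example. The idea is simply that the agent writes down what it tried and whether it worked, so it doesn’t repeat the same failed attempt.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    """What the agent remembers between cycles."""
    goal: str
    done: bool = False
    history: list = field(default_factory=list)  # (action, worked) pairs

    def record(self, action, worked):
        """Write down an action and whether it worked."""
        self.history.append((action, worked))

    def already_failed(self, action):
        """True if this exact action was tried before and did not work."""
        return any(a == action and not ok for a, ok in self.history)


def choose_action(state, candidates):
    """Pick the first candidate that has not already failed."""
    for action in candidates:
        if not state.already_failed(action):
            return action
    return None  # nothing left to try


state = AgentState(goal="find Sarah Connor")
state.record("check phone book", worked=False)

next_action = choose_action(state, ["check phone book", "ask at the diner"])
print(next_action)  # prints: ask at the diner
```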

Bottom line: if it doesn’t have memory, it’s not an agent.

4. It has tools

Don’t go thinking that AI can grab its toolbox and finish that project you’ve had half-done for two and a half years (you don’t have one? I have several 😅).

What I’m talking about here is that our beloved agent can interact with other systems. For example, search the internet, run code, query databases, read and create files, talk to other systems (in nerd speak, that’s called calling APIs), etc.

Having a goal, a cycle that helps you adapt, and memory is not enough. These tools are what allow the agent to work in the real world. What I mean is that tools are what actually give it the ability to “do things.”

Right now, assistants like ChatGPT or Claude already have access to tools. The big difference is that an agent decides when to use those tools without human intervention.

An important note: you should always keep in mind what tools these agents have access to. You don’t want to “accidentally” and “without realizing it” give them the ability to email your boss, buy whatever they want on the Internet, decide where to invest your retirement savings, or control your nuclear arsenal.

Did anyone say Skynet?

Sorry for the pessimism, I think I’ve watched too many sci-fi movies. But seriously, I do believe the tools part requires human oversight.

The reality is that if an agent makes good use of these tools, or gives us a heads up and asks for permission before doing so, it would be extremely useful.
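Here’s one way that “ask for permission first” idea could look in Python. It’s only a sketch under my own assumptions: the tools are fake (nothing is actually searched or emailed), and the `needs_approval` flag and the `approve` callback are names I invented for the example.

```python
def search_web(query):
    """Fake tool: pretends to search the internet."""
    return f"results for {query!r}"


def send_email(to, body):
    """Fake tool: pretends to send an email."""
    return f"email sent to {to}"


# The tools the agent is allowed to use, and which ones are risky.
TOOLS = {
    "search_web": {"func": search_web, "needs_approval": False},
    "send_email": {"func": send_email, "needs_approval": True},
}


def use_tool(name, approve, **kwargs):
    """Run a tool, asking a human first when the tool is marked as risky."""
    tool = TOOLS.get(name)
    if tool is None:
        return f"unknown tool: {name}"
    if tool["needs_approval"] and not approve(name, kwargs):
        return f"{name} blocked: human said no"
    return tool["func"](**kwargs)


def cautious_human(name, kwargs):
    """Stand-in for a real person who rejects every risky request."""
    return False


print(use_tool("search_web", cautious_human, query="AI agents"))
print(use_tool("send_email", cautious_human, to="boss@example.com", body="I quit"))
```

The search goes through without asking anyone, while the email to your boss gets blocked until a human says yes.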

So, to sum it up:

Agent = Goals + Cycle + Memory + Tools.

Let’s get our terms straight

OK, now that we’ve seen what an agent is, I imagine you’re thinking something like:

So what do we call everything else that uses AI?

A big part of this confusion comes from the fact that almost all companies using AI want to say they have “agents.” Obviously, saying that sounds more pro, cooler, “más chévere” (as we say in Perú). You know what I mean.

If you have that question, I totally get it. In fact, I don’t think I have the definitive answer, but I can try.

Here’s a quick rundown of what I understand by each of these terms (there’s no technical standardization that I know of, so you’re reading my opinion here).

Chatbots

The word chatbot sounds super outdated, and in a way, from a technology standpoint, it is. It’s a very basic conversation model that literally follows a script and can’t go off it.

Some people call ChatGPT, Claude, Gemini and the gang chatbots, but I see them as something more advanced.

Personally, I use the word chatbot for those automated systems, like the customer service ones that follow a script and are very easy to hate.

Assistants

This is where assistants and copilots come in. The ones we use every day: Claude, ChatGPT, Copilot, etc. These are AI systems that work with you, suggest things, you have conversations with them, and they help you create documents, code, or content.

While they are very advanced, useful, and improve our productivity by 1294.37% (it’s true, that data comes from a Stanford study... a chatbot told me :P), you still have to tell assistants what to do.

Automations / RPA

If you’re wondering what RPA is, it stands for Robotic Process Automation. It’s nothing more than using software robots to automate actions a human would do on a screen (clicking, filling in data, copy/paste, etc). They’re used to speed up and improve the consistency of repetitive (and boring) tasks that we humans do.

An automation is something much more general. It’s about using software or AI to execute processes without human intervention.

These technologies are great for performing tasks and processes that are very structured and don’t change. In the real world, many of these systems combine both and use automations for repetitive tasks and agents for complex decisions.

But keep in mind that the fundamental difference still holds. An automation will execute the rules and conditionals you programmed (which can be super complex), while an agent decides on its own what to do.

Agents don’t (always) work alone

I may have given you the idea that an agent always works alone, but that’s not the case. We’re increasingly seeing how agents can work with other agents.

Imagine a group of agents working toward a common mission. It reminds me of Mission: Impossible.

What? Were you expecting Tom Cruise? This is the original cast from the TV show!!! Each agent had special skills that allowed the whole group to take on missions that would be impossible for a single agent.

Well... there’s James Bond, but he’s the exception.

Don’t tell me you were expecting a different Bond? That’s not up for debate. Connery is James Bond. Period.

Sorry I got distracted… let’s keep going. I was about to tell you that agents can work with other agents.

Multi-agent collaboration

It’s exactly what you’re imagining. Several agents, each with their own capabilities and tools, working together toward a common goal (Mission: Impossible style).

This makes all the sense in the world. Instead of having one single agent that does everything, we have several agents where each one focuses on solving one part of the problem. That way, each agent can have its own goals, cycle, memory, and tools designed specifically for its mission and on top of that... agents can communicate with each other!!!

So we have a group of agents that share a common goal, each with special skills, that can talk to each other and share information.

And the advantage here is not just having specialized agents. We can also put many agents together to solve a big problem, or we can have some agents review and verify the work of others. The possibilities are endless.

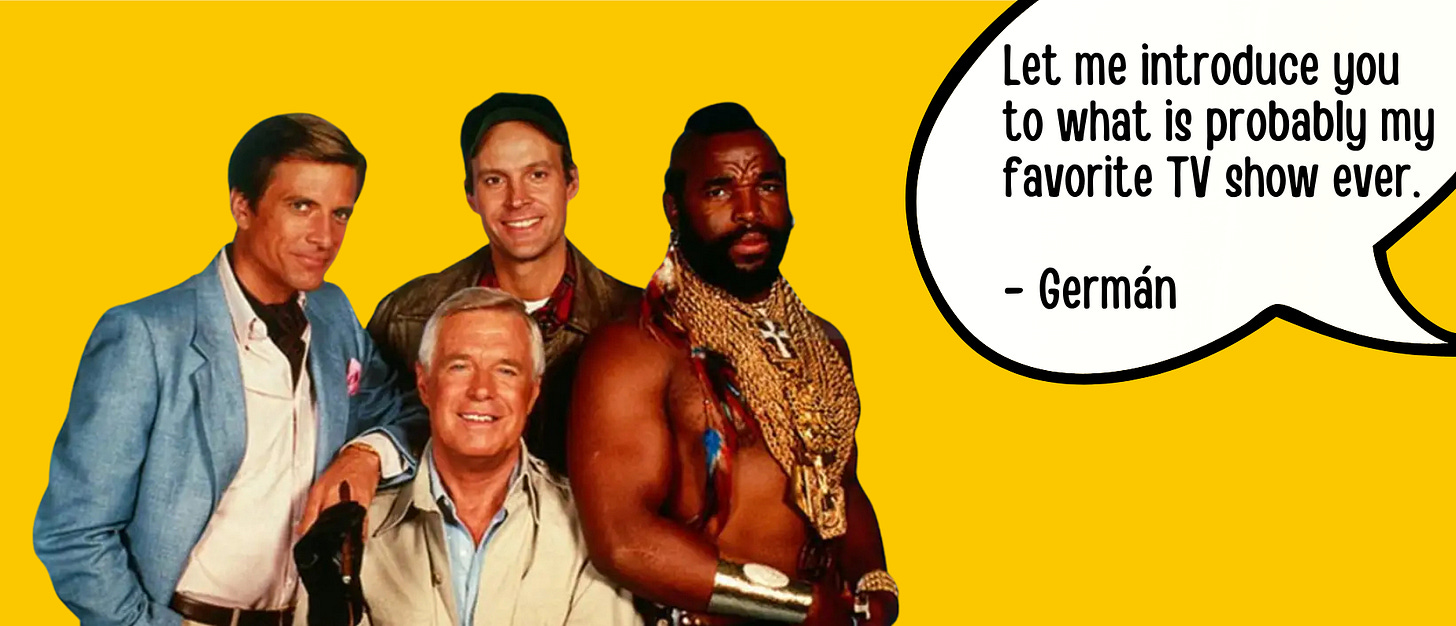

On top of that, these agents can be organized in different ways. Sometimes there’s even a coordinator agent, a sort of orchestra conductor, a leader... a John ‘Hannibal’ Smith of the digital world.

I’m sure you’ve seen The A-Team, and no, Liam Neeson and Bradley Cooper are not in this one. I went off on a tangent again. Sorry, I’ll try to stop with the old TV show references.
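Going back to the coordinator idea for a second, here’s a very simplified sketch of how that structure could look in Python. Big caveat: each “agent” here is just a plain function I made up (`researcher`, `writer`, `reviewer`), standing in for a real agent with its own goals, cycle, memory, and tools. The only point is to show the shape: one coordinator hands out the work, and one agent checks another’s output.

```python
def researcher(topic):
    """Specialist 1: gathers information (stand-in for a real agent)."""
    return f"notes on {topic}"


def writer(notes):
    """Specialist 2: turns the notes into a draft."""
    return f"draft based on {notes}"


def reviewer(draft):
    """Specialist 3: reviews the work of another agent."""
    return "approved" if draft.startswith("draft") else "rejected"


def coordinator(topic):
    """The 'Hannibal' of the team: splits the mission and passes results along."""
    notes = researcher(topic)
    draft = writer(notes)
    verdict = reviewer(draft)
    return {"draft": draft, "verdict": verdict}


result = coordinator("AI agents")
print(result["draft"])    # prints: draft based on notes on AI agents
print(result["verdict"])  # prints: approved
```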

Where we are

Coordinating a group of agents is a complex task. They need to be able to communicate, avoid conflicts, divide the work properly, figure out what to do when they have contradicting information, and so many other things I can’t even imagine.

These multi-agent systems already exist and are a very active area of research and technological development. Universities, startups, and billion-dollar companies are working on how a group of agents can work together, plan complex tasks, and even correct each other. And all of this is happening as we speak.

This kind of research is necessary because most of the problems humanity is trying to solve are so big that a single agent just isn’t enough.

I can’t wait to see what this technology does in the next five years.

Hasta la vista, baby

If you’re reading this, thanks for making it this far. I know it was a long post 😬

Now you’ll not only be able to show off your AI agent knowledge at your next family dinner, you’ll also be able to tell when something is real and when it’s hype from the marketing team (or the CEO) of an AI company.

It’s a strange moment in the history of technology, and I’m sure what I’m about to say is a cliché, but it feels like we’re living in a sci-fi movie.

In the coming years we’re going to see how some of these agents will be incredibly useful and how others will fail miserably, with consequences we can’t predict today.

I think that’s partly why I write this blog, because we’re using a technology every day that we don’t fully understand, and we keep giving it more capabilities and power. And, sci-fi jokes aside, I’ve never liked giving control to something I don’t understand.

But mainly I write because if there’s something I’m passionate about, it’s learning. I want to deeply understand how AI works (and be able to explain it to you, in my own way), not just to use it better, but to use it consciously.

And, let me add…

Note:

Lately it’s impossible to open LinkedIn without running into a post about AI agents. Forgive the skepticism, but in a couple of months roughly 135,674 new experts in this technology have appeared.

This final note is something I had to write, a kind of catharsis. Thanks for reading it.

Since I don’t like ending a post on that note, let me introduce you to one last agent. 😅 Agent 86 from Get Smart. (You thought this was over with The A-Team? Ha!).

Just to be clear, this is Don Adams (not Steve Carell).

This show does go way back: it’s from 1965. I used to watch reruns of reruns in the 80s and early 90s with my dad, and we’d crack up laughing together. Thinking about that brought back some really nice memories.

Here’s a mini-preview, in case you’re curious.

Alright, that’s it for real now.

See you soon!

G