How much energy does AI really use?

The electric bill nobody's showing you.

Watts are a way to measure how much energy is consumed per second. You probably know that each LED bulb in your house uses about 10 Watts, your laptop about 65 Watts, your microwave about 1,000 Watts, and so on. I’m even willing to bet you know that time travel requires exactly 1.21 GigaWatts and a DeLorean. (1 GigaWatt = 1,000,000,000 Watts 😱)

The uncomfortable truth? We don’t actually know how many GigaWatt-hours have been used to train AI models, or how many are being consumed while we chat with them.

Why don’t we know? Because AI companies keep it a secret, and that lack of transparency is just the beginning of this story.

The water and electric bills

Training GPT-4 is believed to have cost over a hundred million dollars and consumed more than 50 GigaWatt-hours of energy. Enough to power San Francisco for three days (or make 40 trips through time). And that’s just the training — meaning: teaching it how to answer.
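If you want to check my math, here is a rough Python sketch. The 50 GigaWatt-hours and the three days come from the estimates above (and "more than 50" means these are lower bounds). Treating each time trip as 1.21 GigaWatts sustained for one full hour is purely my assumption, made so the units line up.

```python
TRAINING_GWH = 50.0          # estimated GPT-4 training energy (from the text)
DAYS_OF_SAN_FRANCISCO = 3    # "three days" (from the text)
DELOREAN_GW = 1.21           # power needed for one time trip
TRIP_HOURS = 1.0             # ASSUMPTION: each trip draws that power for one hour

# Implied city demand: energy divided by time.
city_gwh_per_day = TRAINING_GWH / DAYS_OF_SAN_FRANCISCO
city_avg_gw = city_gwh_per_day / 24

# Energy per trip is power times time; divide the training energy by it.
trips = TRAINING_GWH / (DELOREAN_GW * TRIP_HOURS)

print(f"Implied city demand: {city_gwh_per_day:.1f} GWh/day "
      f"(about {city_avg_gw * 1000:.0f} MW on average)")
print(f"Time trips: {trips:.0f}")
```

Under those assumptions the city works out to roughly 17 GigaWatt-hours per day, and the DeLorean gets about 41 trips.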

But training is only the beginning. Once trained, you need to keep ChatGPT running for 700 million users making over a billion queries a day. Oh, I almost forgot. ChatGPT isn’t alone — you need to add its buddies Gemini, Claude, Midjourney, Copilot, and the whole crew.

All these models live comfortably housed in data centers that run 24 hours a day and generate heat — a lot, and I mean a LOT of heat. And don’t think they cool down with a household fan. These beasts need water… a whole lot of water.

And when I say “a whole lot of water,” I mean that many of these data centers consume millions of liters of drinking water per day, just to stay cool and answer our questions.

When AI comes to town

Most people know there’s an energy problem. Some also know about the water problem. When I was researching for this post, I found another issue — one I honestly hadn’t thought about (as obvious as it may be).

These data centers, with their brutal consumption of energy and water, have to be physically located somewhere — and not necessarily far from you.

A proposal that’s hard to turn down

These companies are looking for cheap and reliable energy, water, good internet connectivity, and governments willing to offer them tax incentives.

In return, they show up with promises that seem to solve all of a city’s problems. Billions of dollars for the local economy, jobs for thousands of people, massive investments…

That all sounds great, but there’s one teeny-tiny problem. When a city offers tax benefits, it’s not just incentivizing one company to build its data center there — it’s creating an incentive for any company to set up shop.

What happens over time is that a cluster forms — meaning several companies take advantage of these benefits and end up building multiple data centers operating simultaneously in one city, competing for energy and water with all the people who live there.

It all sounds great until…

…you notice your electric bill is a little higher (or until the blackouts start).

Here’s the thing: when these data centers start operating in a city, they use as much energy as hundreds of thousands of families. Imagine that overnight, a city’s energy demand triples.

To handle this new demand, you need more power plants, more substations, more electrical cables running everywhere — in short… more infrastructure.

Sure, AI companies pay a ton of money, but they get discounted bulk rates, so guess who ends up paying for all this new infrastructure?

You. Yes, you. Or rather, the people who live in those cities.

To make this a little more personal, let’s stop talking about “the people who live in those cities” and give this person a name: Juan.

Juan is someone who just wants to live in peace, until one day — boom! — he finds out there’s a data center in his city…

Juan will probably start noticing that his electric bill keeps going up. Studies suggest he could end up paying 25% more.

Another important detail: energy demand is growing faster than power plants can generate it, so at some point, someone might have to choose between keeping the data centers running and… keeping Juan’s lights on.

But that’s not all — let’s not forget about the water. These data centers have an enormous thirst, using millions of liters of drinking water per day just to keep from overheating.

From what I’ve found, 80% of the water used to cool data centers evaporates, and the remaining 20% goes into the city’s water treatment system — which probably isn’t equipped to handle that volume.

And on top of all this, did anyone remember climate change? When droughts come (and they’re getting more frequent and longer), communities will be competing for drinking water.

So between the billion-dollar corporations that want to cool their data centers to process memes… I mean, their AI needs… and poor Juan — who do you think is going to win?

Noise, heat, and cables everywhere

As if waiting to run out of electricity and water wasn’t enough, Juan has other problems.

He suddenly discovers that these data centers — buildings the size of football stadiums that run 24 hours a day, 7 days a week, 365 days a year — emit a hum that never stops. This comes from their cooling systems, which keep the servers from melting, and it can be heard from several blocks away, all day, every day.

And that’s nothing. On top of the constant humming, there’s the heat. All these cooling systems have to dump that heat somewhere, right? Well, walking near a data center in summer can make Juan feel like he’s being slow-cooked.

Remember when I told you there wasn’t enough infrastructure to handle the energy demand? Well, part of the “solution” is more substations, electrical cables hanging everywhere, and of course, maintenance trucks driving through the neighborhood at all hours.

With all of this, many are starting to rethink what it means to have these massive data centers. For example, Amsterdam has stopped accepting new data centers because it was running out of land for housing and space for the electrical grid. Then there’s Ireland, where data centers already consume 21% of the country’s electricity. Tech companies like Amazon, Microsoft, and Google have also been criticized for placing data centers in countries with water scarcity.

What’s clear to me is that this conversation is just getting started, and as always, the consequences will probably go far beyond what we can imagine. Sorry, Juan.

What AI companies won’t tell you

After seeing everything that happens to Juan, I’m guessing you’re wondering how much energy we use when we talk to AI.

Let’s try something… let’s see if we can calculate the energy used in a conversation with ChatGPT.

A simple query probably uses about 0.5 watt-hours. If your conversation with AI goes 20 back-and-forths, we’re talking about 10 watt-hours. What if we add some image generation? You could be looking at 25-35 watt-hours.
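Here is that conversation as a tiny Python sketch. The 0.5 watt-hours per query is the estimate above, and the 10-Watt LED bulb is the one from the intro. The function names are mine, just for illustration. Energy is measured in watt-hours (power multiplied by time), which is why the bulb comparison works.

```python
WH_PER_TEXT_QUERY = 0.5   # watt-hours per simple query (the estimate above)
LED_BULB_WATTS = 10       # the LED bulb from the intro


def conversation_wh(turns: int, wh_per_query: float = WH_PER_TEXT_QUERY) -> float:
    """Energy for a text-only conversation, in watt-hours."""
    return turns * wh_per_query


def led_hours(energy_wh: float, bulb_watts: float = LED_BULB_WATTS) -> float:
    """Hours a bulb of the given power could run on that much energy."""
    return energy_wh / bulb_watts


chat = conversation_wh(20)
print(f"20 turns: {chat} Wh, or {led_hours(chat)} h of one LED bulb")
```

So a 20-message chat is roughly one LED bulb left on for an hour, if the per-query guess holds.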

If you’re like me, that won’t be your only conversation of the day… and that’s how more than 700 million people use this technology every single day. Doing a more precise calculation, I’d say ChatGPT’s total consumption must be pretty close to a bazillion GigaWatts.

I wish I had a more precise number, but the truth is — like almost everyone when it comes to calculating how much energy AI uses — I’m guessing.
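That said, the two numbers I do have allow a back-of-the-envelope floor. This sketch multiplies the billion daily queries by the 0.5 watt-hour guess, both taken from this post. It assumes every query is a simple text one and ignores cooling, idle servers, and image generation, so treat the result as a rough lower bound and not a measurement.

```python
QUERIES_PER_DAY = 1_000_000_000   # "over a billion queries a day" (from the text)
WH_PER_QUERY = 0.5                # simple-query estimate (from the text)
TRAINING_GWH = 50.0               # GPT-4 training estimate (from the text)

# ASSUMPTION: every query costs the same 0.5 Wh; overhead is ignored.
daily_gwh = QUERIES_PER_DAY * WH_PER_QUERY / 1e9    # Wh -> GWh
average_mw = daily_gwh * 1000 / 24                  # GWh per day -> average MW
days_to_match_training = TRAINING_GWH / daily_gwh

print(f"Daily energy: {daily_gwh} GWh (about {average_mw:.1f} MW around the clock)")
print(f"Days of queries to equal training: {days_to_match_training:.0f}")
```

With these inputs, answering questions burns through the equivalent of the whole training run in about 100 days.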

For example, MIT researchers have to make do by measuring open-source AI models to try to estimate how much commercial models like ChatGPT consume, because OpenAI (along with every other AI company) doesn’t want to share their real consumption numbers.

OpenAI won’t tell us how many watt-hours a ChatGPT query really uses. Google won’t tell us how much energy Gemini needs to answer a question. My mamama (my grandmother) won’t give you her recipe for seco de cabrito con frejoles, arroz y tamalito verde (a legendary Peruvian stew; trust me, it’s worth fighting for). Microsoft won’t tell us how much energy its AI data centers use.

These companies treat their energy consumption data like Coca-Cola treats its secret formula or KFC treats its 11 herbs and spices recipe — except here, the stakes are much higher.

The same goes for water usage — we don’t know how that consumption will grow. I imagine (exaggerating a little) that this will end in a war over water, Mad Max style.

How does a country project how much energy it'll need by 2030 without that data? And what about environmental regulators? How can they create policies if they don't have this information? They can't. Period.

Where is all this headed?

Back to the Future, The Godfather, Mad Max… sorry if I went a little overboard with the movie references — I couldn’t help myself.

AI, the way it works today, consumes brutal amounts of energy. And from what I can see, in the future it’s going to consume a lot more.

Reasoning models consume 43 times more than a standard model. On top of that, every AI company is now saying the future is “AI agents” — which will work for hours researching, analyzing, coding, shopping online, even looking up the best recipes for mondongo (a traditional Latin American tripe soup — don’t knock it till you try it)… all while continuing to consume water and energy.

And speaking of all this — do you know how much energy our brain consumes?

20 Watts.

Yes. Your brain consumes 20 #@&!ing Watts.

Twenty, veinte, vingt, venti, vinte, zwanzig, twintig, tjugo, двадцать, 二十, 二十, 스물, 이십, עשרים, عشرون, yirmi, बीस, είκοσι, viginti… #@&!ing Watts.

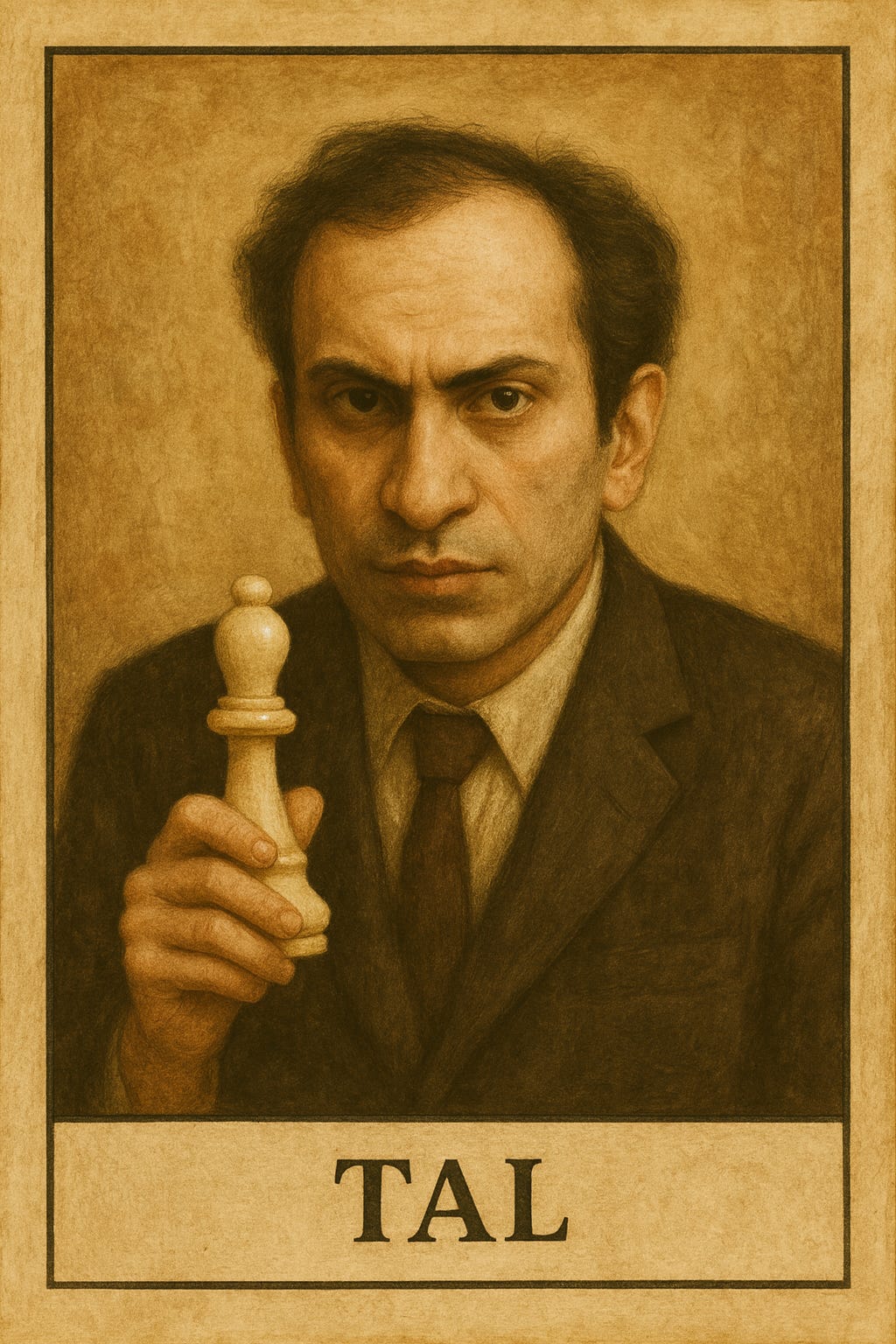

To put it another way: it doesn’t matter if you’re playing chess against Mikhail Tal, reading this post, or watching that movie you know by heart because you’ve seen it 7,567 times. Your brain will use approximately the same amount of power: 20 #@&!ing Watts.

The human brain is a marvel of efficiency. Let me do one last calculation…

Imagine a university with 10,000 students. Those ten thousand brains learning, analyzing, researching, creating… consume only 200,000 Watts, or 0.2 MegaWatts (that’s 0.0002 GigaWatts). And they don’t need power plants. All they need is ramen noodles and caffeine (plus pizza and beer).
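A quick unit check in Python, using only the 20 Watts per brain, the 10,000 students, and the 1.21 GigaWatts from the intro. The variable names are mine.

```python
BRAIN_WATTS = 20          # power of one human brain (from the text)
STUDENTS = 10_000         # size of the imaginary university (from the text)
TIME_TRAVEL_WATTS = 1.21e9  # 1.21 GigaWatts, from the intro

total_watts = BRAIN_WATTS * STUDENTS
print(f"{total_watts:,} W = {total_watts / 1e6} MW = {total_watts / 1e9} GW")

# How many brains could run on one DeLorean's worth of power?
brains_per_delorean = TIME_TRAVEL_WATTS / BRAIN_WATTS
print(f"Brains per 1.21 GW: {brains_per_delorean:,.0f}")
```

Ten thousand brains come to 200,000 Watts, which is 0.2 MegaWatts, and one time trip's worth of power could run about 60 million of them.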

How do you like THAT, AI? 😘

See you in the next post,

G

Off topic: If you want to collect chess player trading cards, I posted Kasparov’s in another article.

Hey! I'm Germán, and I write about AI in both English and Spanish. This article was first published in Spanish in my newsletter AprendiendoIA, and I've adapted it for my English-speaking friends at My AI Journey. My mission is simple: helping you understand and leverage AI, regardless of your technical background or preferred language.

Wow, that's pretty scary. Thank you for your attention to this topic and for sharing it with us. It is already such an easy way to find answers that we simply don't think of the cost of a quick little question. Just wondering, is GPS also built using AI processes? I'm curious to know how much of what we already use regularly is actually AI with an older name. And/or how far has AI reached into supporting those kinds of now-standard processes? And, if so, what strategies can we use to limit how much we are using AI unwittingly? So much to think about and so much that the companies do not want us to think about!!