When an AI tells you you're not human

How AI detectors work (and why we can't trust them)

“This text was generated by AI” is what our professor told us, while highlighting a couple of paragraphs that I had written for a university group assignment.

Technically, what the professor was telling us was that Turnitin (an academic review tool) had flagged those paragraphs as probably written by AI.

Imagine my shock. There I was, in front of my classmates, trying to explain why those paragraphs, which I wrote with care and dedication, were being treated as machine-made. How do you even prove that you actually wrote something?

Luckily, I know a thing or two about how AI works 😉, and we ended up having a super interesting conversation about these detection tools, how they work, and why they’re not 100% reliable.

Then I started to think:

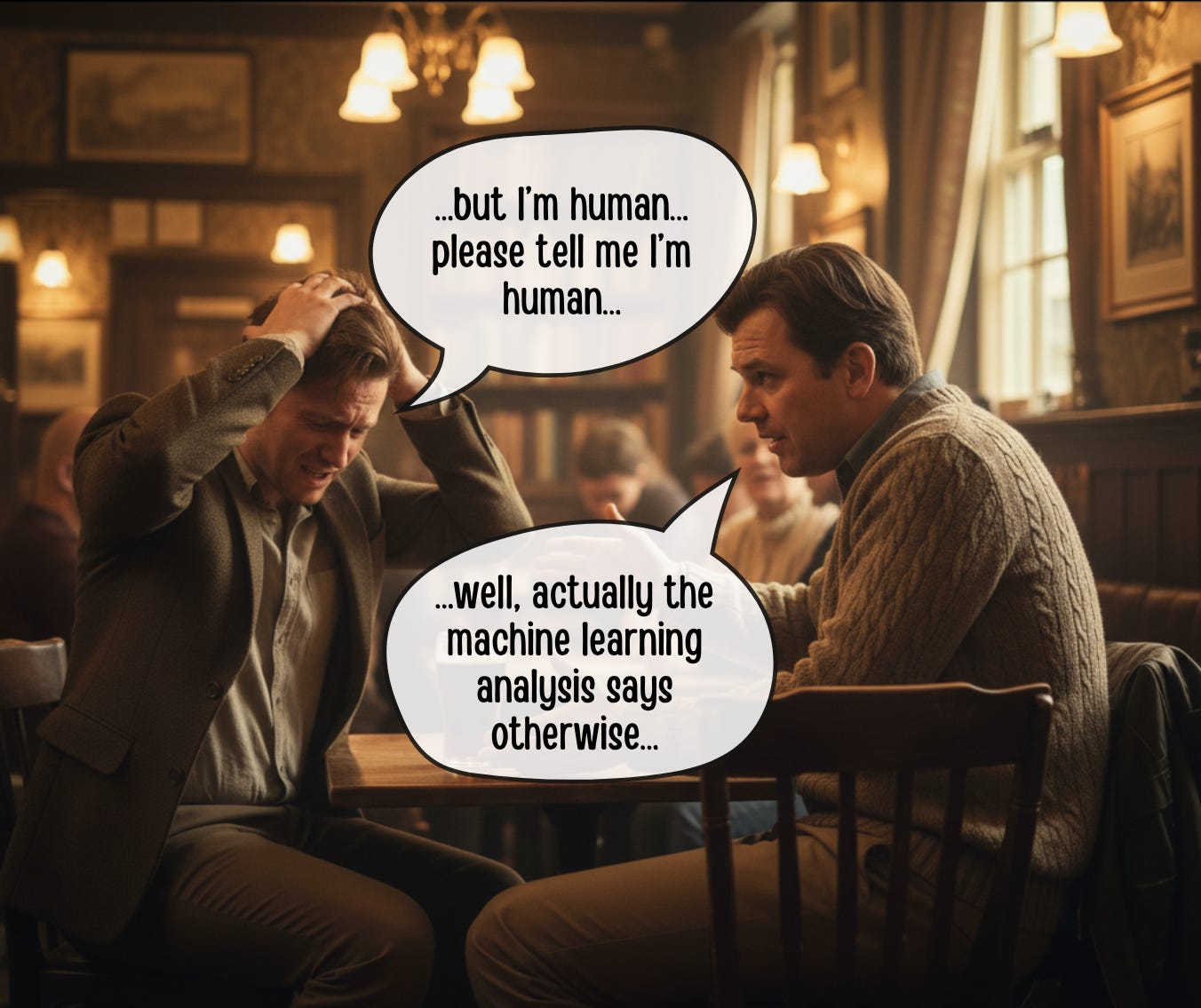

I had been accused by a machine of not being human.

So I went down the rabbit hole to understand how these detectors actually work, how they analyze text, and how reliable they really are. And that’s what I want to talk about today.

Note: Wait, university?

Yep. For the past couple of years I’ve been studying Economics Engineering. What can I say, I’m 46 and I never stop learning new things :)

Back to the topic:

I had been accused by a machine of not being human.

Can AI-generated text be detected?

It’s complicated.

All of our AI assistants have been trained on millions and millions of human-written texts with the sole purpose of imitating the way we write. That’s literally their job. On top of that, every new version of ChatGPT, Claude, Gemini, etc., writes in a more “human-like” way, which makes me wonder if we can really detect whether something was written by a machine or a person.

There’s a whole industry around this. Universities and companies are looking for ways to identify AI-generated content, so many millions have been invested in building solutions that try to detect it. I did some reading and found five main methods used to try to solve this problem.

Let me spoil the surprise by telling you that none of these methods are 100% reliable, and that’s exactly why it is important to understand how they work.

Perplexity

I’m not talking about the search engine. When I say perplexity, I’m talking about a way to measure how “predictable” or “surprising” a text is for an LLM. This is the most common method used to try to detect whether a text was written by AI or by a human.

For example, imagine I ask you to complete this sentence:

“The sky is ________”

I bet the first thing that came to mind was “blue,” which is the most common continuation and the one a language model would write. That means it has low perplexity, or in plain English, “that surprises no one.”

I asked ChatGPT and it gave me these answers:

“The sky is blue”

“The sky is infinite”

“The sky is beautiful”

“The sky is the limit”

All pretty predictable, so according to this method, they were more likely written by a machine.

But what if the answers were:

“The sky is a government invention to hide the aliens”

“The sky is eating cau cau in bed while binge-watching Netflix” (don’t judge me)

(BTW, cau cau is a traditional Peruvian tripe and potato stew, and my favorite dish in the whole world. And yes, I said tripe.)

Let’s say those are a tiny bit harder to predict, meaning they have high perplexity.

Some detectors use this method because AI-generated texts tend to have low perplexity. AI usually picks the “safest” words when writing, which makes it predictable. Humans are supposed to be less structured, some more than others :P, and therefore less predictable.
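If you like seeing ideas in code, here is a minimal sketch of how perplexity is calculated. The per-word probabilities are made up by me for illustration; a real detector would get them from an actual language model.

```python
import math

def perplexity(token_probs):
    """Perplexity = exp of the average negative log-probability per token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)

# Hypothetical probabilities a model might assign to each word in a sentence.
predictable = [0.9, 0.8, 0.9, 0.7]    # something like "The sky is blue"
surprising = [0.9, 0.8, 0.9, 0.001]   # something like "The sky is cau cau..."

print(round(perplexity(predictable), 2))
print(round(perplexity(surprising), 2))
```

With these invented numbers, the second sentence ends up with a perplexity several times higher than the first, just because of one unexpected word.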

The problem with this method is that clear, direct, well-structured texts (you know, the way we're supposed to write) are more predictable than when, I don't know, you include cau cau, 80s TV shows, and other random stuff in your documents.

So the better you write, the more likely they’ll think you’re a robot.

But these tools don’t just look at how predictable your words are. They also measure how much your sentences vary from one to the next, and that’s called “burstiness.”

Humans write varied sentences. Maybe you start with a complex one, then a simple one, then a joke... you get me. Robots tend to have a more uniform (and predictable) structure.
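Here is a rough sketch of that idea. I am using sentence length as a stand-in for "structure", which is my own simplification; real tools may measure variation differently (for example, how perplexity changes from sentence to sentence).

```python
import re
import statistics

def sentence_lengths(text):
    """Split on ., ! or ? and count the words in each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]

def burstiness(text):
    """Variation in sentence length: standard deviation divided by the mean."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # one sentence has nothing to vary against
    return statistics.pstdev(lengths) / statistics.mean(lengths)

uniform = "I like stew. I like rice. I like soup."
varied = ("Wow. I spent the whole weekend cooking cau cau "
          "for twelve very hungry people. It was great.")

print(burstiness(uniform))
print(burstiness(varied))
```

The uniform text scores zero because every sentence has the same length, while the mix of very short and very long sentences scores higher.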

So these tools use both factors to try to decide how likely it is that a text was written by AI. Does it work? Sometimes. Is it reliable? Nope.

Style analysis

Each one of us (including machines) has a way of writing. The analysis of these writing styles is called stylometry. Think of it as the fingerprint of our texts.

For example, check out these short phrases criticizing AI detectors, each from a different author:

“AI detectors are fundamentally unreliable tools that often unfairly penalize legitimate human writing due to their high false positive rates.” (Gemini)

“AI detectors fail too often to be considered reliable tools.” (ChatGPT)

“AI detectors are notoriously inaccurate, unfairly penalizing students and professionals with false positives while the real cheaters easily learn to evade them.” (Claude)

“[outraged] Now machines are the ones telling us if we’re humans! Don’t believe them [/outraged]” (Germán)

All of these phrases say more or less the same thing, but each one has its own style, and this analysis looks for patterns in writing: how different the sentences in the text are, how many common words are used, what the punctuation looks like, etc.

If you’re wondering how style can be analyzed, I found a really interesting paper, “Distinguishing AI-Generated and Human-Written Text Through Psycholinguistic Analysis,” that identifies 29 characteristics.

Here are the six categories of those characteristics:

Lexical: measure vocabulary and word variety.

Syntactic: analyze sentence structure and complexity.

Sentiment and subjectivity: measure the emotion and opinion in the text.

Readability: measure how easy it is to read and understand the text.

Named entities: analyze references to people, places, dates, etc.

Uniqueness and variety: evaluate how original the text is and whether it uses different structures.

Detectors look for patterns that seem “suspicious” to them.

For example: some look for the use of certain punctuation marks (did someone say em dash “—”?), others look for words that are too sophisticated or too simple; they also check if sentences are roughly the same length.
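To make this less abstract, here is a small sketch that pulls a handful of style measurements out of a text. These four features and the short word list are my own picks for illustration; they are not the 29 characteristics from the paper.

```python
import re

# A tiny, hand-picked list of very common English words (an assumption, not a standard).
FUNCTION_WORDS = {"the", "a", "an", "and", "or", "but", "of", "to",
                  "in", "is", "are", "that", "it"}

def style_features(text):
    words = re.findall(r"\w+", text.lower())
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not words or not sentences:
        raise ValueError("text has no words to analyze")
    # Commas, semicolons, colons, em dashes, exclamation and question marks.
    punctuation = re.findall(r"[,;:\u2014!?]", text)
    return {
        "type_token_ratio": len(set(words)) / len(words),      # vocabulary variety
        "avg_sentence_length": len(words) / len(sentences),    # words per sentence
        "function_word_ratio": sum(w in FUNCTION_WORDS for w in words) / len(words),
        "punctuation_per_word": len(punctuation) / len(words),
    }

sample = "AI detectors fail too often to be considered reliable tools."
for name, value in style_features(sample).items():
    print(name, round(value, 2))
```

A detector would compare numbers like these against what it considers typical for humans and for machines, which is exactly where the unfairness can creep in.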

One of the criticisms of this method is that it can be unfair to non-native speakers (like myself), who tend to write in a more direct, simple style that matches some of the “suspicious patterns” these AI detectors flag.

Machine learning

What if we use AI to detect AI? Great idea! Using AI to tell us if something is human... I’m pretty sure that’s how Terminator started.

To do this, we train machine learning models with thousands and thousands of texts, each one labeled as written by a human or a machine. With that training, the model learns to recognize the patterns of each, and when it’s done, the model is ready to try to classify any text you give it and tell you whether it thinks it was written by a human or an AI (and I cannot stress the word try enough).
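Here is a toy version of that training process, just to show the mechanics. It is a tiny Naive Bayes classifier that I picked because it fits in a few lines; real detectors use much bigger models and far more data, and the four training texts are invented by me.

```python
import math
import re
from collections import Counter

def tokenize(text):
    return re.findall(r"\w+", text.lower())

class TinyNaiveBayes:
    def __init__(self):
        self.word_counts = {}        # label -> Counter of words seen with that label
        self.doc_counts = Counter()  # label -> number of training texts
        self.vocab = set()

    def train(self, examples):
        for text, label in examples:
            self.doc_counts[label] += 1
            counts = self.word_counts.setdefault(label, Counter())
            for word in tokenize(text):
                counts[word] += 1
                self.vocab.add(word)

    def predict(self, text):
        total_docs = sum(self.doc_counts.values())
        scores = {}
        for label, counts in self.word_counts.items():
            score = math.log(self.doc_counts[label] / total_docs)
            total_words = sum(counts.values())
            for word in tokenize(text):
                # Add-one smoothing so unseen words do not zero out the score.
                score += math.log((counts[word] + 1) / (total_words + len(self.vocab)))
            scores[label] = score
        return max(scores, key=scores.get)

# Made-up labeled examples, only for illustration.
examples = [
    ("In conclusion, it is important to note that detectors are unreliable tools.", "ai"),
    ("Furthermore, it is important to consider multiple perspectives.", "ai"),
    ("lol my cat just knocked my coffee on the laptop again", "human"),
    ("honestly I ate cau cau in bed and regret nothing", "human"),
]

model = TinyNaiveBayes()
model.train(examples)
print(model.predict("It is important to note the following."))
print(model.predict("my cat ate the coffee"))
```

Notice that the model only knows the words it saw during training. A human who happens to write "it is important to note" gets the same label as the machine.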

Sounds good, right?

Well, the reality is that it doesn’t work that well. For example, in 2023 OpenAI launched a classifier that could correctly identify AI-generated text only 26% of the time. And to make things even uglier, it told 9% of flesh-and-blood humans that their texts were AI-generated. This tool worked so poorly that they ended up shutting it down after just a few months.
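To get a feel for what those two percentages mean in practice, here is a quick back-of-the-envelope calculation. The 26% and 9% come from the figures above; the 1,000 texts and the assumption that 10% of them are AI-written are numbers I invented for the example.

```python
def flagged_breakdown(total_texts, ai_share, true_positive_rate, false_positive_rate):
    """Return (AI texts caught, humans falsely flagged, share of flags that are right)."""
    ai_texts = total_texts * ai_share
    human_texts = total_texts - ai_texts
    caught = ai_texts * true_positive_rate
    falsely_accused = human_texts * false_positive_rate
    precision = caught / (caught + falsely_accused)
    return caught, falsely_accused, precision

# Hypothetical scenario: 1,000 texts, 10% of them written by AI.
caught, falsely_accused, precision = flagged_breakdown(1000, 0.10, 0.26, 0.09)
print(round(caught), round(falsely_accused), round(precision, 2))
```

In this made-up scenario, about 26 AI texts get caught while about 81 humans get flagged by mistake, so roughly three out of four accusations land on a person. Change the assumed share of AI texts and the picture changes, but the point stands: a 9% false alarm rate is a lot when most writers are human.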

There are other tools, but they all suffer from the same issues. They need a minimum amount (around 20%) of AI-generated content in a document to classify it correctly. They’re also designed to try to avoid accusing a human of being a machine (even though it happened to me), and this means they let a lot of AI-generated content slip through.

On top of all that, there’s a deeper problem. This technology only works for detecting the type of text it was trained on. Meaning, if we train a detector with texts created by GPT-3, there’s no guarantee it will still work with GPT-4. And if you train it with English content, it might fail in Spanish. And (as you can imagine) every time a new model comes out, these tools become obsolete.

And what about trying…

Watermarks

Yes, watermarks... like on bills. You still remember paper money, right?

Maybe today you only use digital wallets and cash is a thing of the past. Although there’s something special about carrying cash (especially when your phone runs out of battery).

Anyway, it turns out that to prevent people from counterfeiting bills, someone invented something called watermarks. They’re designs added to the paper during manufacturing that you can only see when you hold them up to the light.

Well, that’s exactly what AI companies can do: mark their texts at the factory.

Meaning, they can include an “invisible signature” in the text they generate. To do this, their language models have to write in a specific way that can’t be detected by a human reading the text, but can be detected by tools that know that specific writing pattern.
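Here is a toy sketch of one idea from the research literature, sometimes called a "green list" watermark. I am not claiming any company does it this way; the tiny vocabulary, the secret key, and the function names are all mine. The generator quietly prefers "green" words (chosen by a secret hash of the previous word), and the detector counts how many green words show up.

```python
import hashlib
import math
import random

# A made-up mini vocabulary standing in for a real model's vocabulary.
VOCAB = ["sky", "blue", "stew", "tripe", "robot", "human", "cash", "paper",
         "light", "word", "text", "model", "write", "read", "love", "cau"]

def is_green(previous_word, word, key="secret"):
    """Put roughly half the vocabulary on the green list, depending on the
    previous word and a secret key only the detector knows."""
    digest = hashlib.sha256(f"{key}|{previous_word}|{word}".encode()).digest()
    return digest[0] % 2 == 0

def generate(length, watermark, seed=0):
    """Toy 'model' that picks words at random; with watermark=True it only
    picks green words (real schemes nudge probabilities more gently)."""
    rng = random.Random(seed)
    words = ["sky"]
    while len(words) < length:
        candidates = VOCAB
        if watermark:
            green = [w for w in VOCAB if is_green(words[-1], w)]
            candidates = green or VOCAB
        words.append(rng.choice(candidates))
    return words

def green_z_score(words):
    """How far the green-word count sits from the 50% expected by chance."""
    pairs = list(zip(words, words[1:]))
    if not pairs:
        raise ValueError("need at least two words")
    hits = sum(is_green(prev, word) for prev, word in pairs)
    n = len(pairs)
    return (hits - 0.5 * n) / math.sqrt(0.25 * n)

marked = generate(200, watermark=True)
plain = generate(200, watermark=False)
print(round(green_z_score(marked), 1), round(green_z_score(plain), 1))
```

A reader sees a normal-looking sequence of words either way. Only someone with the key can count the green words and notice that the marked text has far more of them than chance would explain.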

And this, in theory, is an almost perfect solution. If we had these watermarks in the text, you could know for sure whether it was written by a robot or a human. There’s just one small detail...

For this to work, all companies would need to add these watermarks to their language models. I find it very hard to believe that Google, OpenAI, Anthropic, Meta, Mistral, DeepSeek, Alibaba, etc., etc., would all agree and implement it. And let’s not even talk about open source language models.

Note: I imagine that for many of these companies, it’s not very convenient for people to easily detect that a text was written by an LLM.

Semantic analysis

This method is pretty interesting. In simple terms, it’s a way of asking an AI if it was the one who wrote something.

Basically, you take a text, try to figure out which LLM could have written it, and then use that same LLM to see how likely it is that it’s the author.

How do you do that? you might ask.

You generate small variations of the text and check if the AI is more likely to have written any of the variations than the original. If ChatGPT says all variations are less likely than the original version, that’s a strong sign the original was written by ChatGPT. Make sense?

Let me give you an example. Say you want to check if this text is from a human or an AI:

“I love callos a la madrileña, but I prefer cau cau.”

(Callos a la madrileña is a traditional Spanish tripe stew, and cau cau is its Peruvian cousin. Yes, I have strong opinions about tripe stews.)

The first thing I need to figure out is which of all the AIs could have generated this text. Let’s say I pick ChatGPT.

OK, so now I need to create some variations and “ask” ChatGPT how likely it is that it wrote all of them (plus the original).

Let’s say I give it the original and some variations and ChatGPT gives me the probability of having written each one:

“I love callos a la madrileña, but I prefer cau cau.” (prob: 70%)

“I’m fascinated by callos a la madrileña, although I prefer cau cau.” (prob: 85%)

“I’m enthusiastic about callos a la madrileña, but I lean towards cau cau.” (prob: 72%)

“I’m seduced by callos a la madrileña, but I hold a greater predilection for cau cau.” (prob: 25%)

See how some variations are more likely than the original text I want to compare? With this, I could say it’s unlikely that this text was written by ChatGPT.
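The comparison I just did by eye can be written in a few lines. This follows my simplified rule (is the original more likely than every rewrite?) with the example probabilities from above. The actual paper works with log-probabilities averaged over many rewrites, so treat this as an illustration only; the function name is mine.

```python
def share_of_variations_below(original_prob, variation_probs):
    """Fraction of rewrites the model finds LESS likely than the original."""
    if not variation_probs:
        raise ValueError("need at least one variation")
    below = sum(p < original_prob for p in variation_probs)
    return below / len(variation_probs)

original = 0.70
variations = [0.85, 0.72, 0.25]  # the three rewrites from the example

share = share_of_variations_below(original, variations)
looks_like_peak = share == 1.0   # True only if the original beats every rewrite

print(round(share, 2), looks_like_peak)
```

Only one of the three rewrites scores lower than the original, so the original is not sitting at a "peak", and by this rule it does not look like something ChatGPT wrote.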

But now I’d have to test with the gazillion other LLMs out there. Can you imagine doing this whole process for each one of them? Where I come from we call that “trabajo de hormiga” (ant’s work, meaning painfully tedious). And let’s not even talk about how much running all those tests would cost.

Note: Of course, I’m oversimplifying the process and the math behind this. If you want to learn more, check out the paper “DetectGPT: Zero-Shot Machine-Generated Text Detection using Probability Curvature.”

What happens when you write in Spanish?

Turns out all the methods I just showed you work even worse in Spanish.

This is because all these detectors have been trained primarily with English texts, probably 80% of all their training data. The remaining 20% is for all the other languages in the world... so yeah, I’m screwed.

To give you an idea, when OpenAI launched their detection tool they literally said “We recommend using the classifier only for English text. Its performance is significantly worse in other languages.”

Sí señor.

Are we really letting machines decide if we’re human?

Think about it for a second. We trained artificial intelligence using millions and millions of human-written texts until we finally got a machine to write like us. Now, we can no longer tell the difference between text written by robots and by human beings, and that’s a problem.

So, to solve this problem, we’re building new machines so that they can analyze the text and tell us whether it was created by humans or not. Are you sure that’s what we want? Don’t you think we’re giving Skynet too much power?

As for me, I hope I don’t get accused of being an AI again in the future ;)

Best,

G (the human)